OpenAI delivers GPT-4o fine-tuning

OpenAI has announced the release of fine-tuning capabilities for its GPT-4o model, a feature eagerly awaited by developers. To sweeten the deal, OpenAI is providing one million free training tokens per day for every organisation until 23rd September.

Tailoring GPT-4o using custom datasets can result in enhanced performance and reduced costs for specific applications. Fine-tuning gives granular control over the model’s responses: developers can customise structure and tone, and teach the model to follow intricate, domain-specific instructions.

Developers can achieve impressive results with training datasets comprising as few as a few dozen examples. This accessibility paves the way for improvements across various domains, from complex coding challenges to nuanced creative writing.
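For illustration, a small fine-tuning dataset of this kind can be written as JSON Lines, with one chat-formatted example per line. The sketch below builds the contents of such a file in Python. The support-ticket content, the system prompt, and the helper names `to_chat_example` and `to_jsonl` are invented for this example and do not come from OpenAI's announcement.

```python
import json


def to_chat_example(system_prompt, user_text, assistant_text):
    """Build one training example in the chat 'messages' layout."""
    return {
        "messages": [
            {"role": "system", "content": system_prompt},
            {"role": "user", "content": user_text},
            {"role": "assistant", "content": assistant_text},
        ]
    }


def to_jsonl(examples):
    """Serialise examples as JSON Lines: one JSON object per line."""
    return "\n".join(json.dumps(ex, ensure_ascii=False) for ex in examples)


# Hypothetical support-ticket data, invented purely for illustration.
SYSTEM = "You are a concise support agent for a fictional product."
pairs = [
    ("How do I reset my password?",
     "Open Settings, choose Security, then select Reset password."),
    ("Can I export my data?",
     "Yes. Go to Settings, then Export, and pick CSV or JSON."),
]

examples = [to_chat_example(SYSTEM, q, a) for q, a in pairs]
jsonl_text = to_jsonl(examples)
print(jsonl_text)
```

A real dataset would hold at least the few dozen examples mentioned above, each showing the structure and tone the fine-tuned model is expected to reproduce.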

“This is just the start,” assures OpenAI, highlighting its commitment to continuously expanding model customisation options for developers.

GPT-4o fine-tuning is available immediately to all developers across all paid usage tiers. Training costs are set at $25 per million tokens, with inference priced at $3.75 per million input tokens and $15 per million output tokens.
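To make these prices concrete, the following sketch estimates costs from token counts. It rests on assumptions not stated in the article: that billable training tokens equal the tokens in the training file multiplied by the number of epochs, and that any free allowance is simply subtracted from that total. The function names and the sample token counts are hypothetical.

```python
from decimal import Decimal

# Prices in US dollars per million tokens, as quoted in the article.
TRAINING_PER_M = Decimal("25")
INPUT_PER_M = Decimal("3.75")
OUTPUT_PER_M = Decimal("15")
MILLION = Decimal(1_000_000)


def training_cost(tokens_in_file, epochs=1, free_tokens=0):
    """Estimate training cost.

    Assumption: billable tokens = file tokens * epochs, minus any free
    allowance, and never below zero.
    """
    billable = max(tokens_in_file * epochs - free_tokens, 0)
    return TRAINING_PER_M * billable / MILLION


def inference_cost(input_tokens, output_tokens):
    """Estimate inference cost for the given input and output token counts."""
    return (INPUT_PER_M * input_tokens + OUTPUT_PER_M * output_tokens) / MILLION


# Hypothetical workload: a 2M-token file trained for 3 epochs,
# then 1M input tokens and 200k output tokens of inference.
full_price = training_cost(2_000_000, epochs=3)
with_allowance = training_cost(2_000_000, epochs=3, free_tokens=1_000_000)
serving = inference_cost(1_000_000, 200_000)

print(f"Training, no allowance:   ${full_price:.2f}")
print(f"Training, 1M tokens free: ${with_allowance:.2f}")
print(f"Inference:                ${serving:.2f}")
```

`Decimal` is used rather than floating point so that prices such as 3.75 are represented exactly in the arithmetic.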

OpenAI is also making GPT-4o mini fine-tuning accessible with two million free daily training tokens until 23rd September. To access this, select “gpt-4o-mini-2024-07-18” from the base model dropdown on the fine-tuning dashboard.

The company has collaborated with select partners to test and explore the potential of GPT-4o fine-tuning:

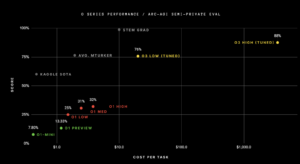

Cosine’s Genie, an AI-powered software engineering assistant, leverages a fine-tuned GPT-4o model to autonomously identify and resolve bugs, build features, and refactor code alongside human developers. By training on real-world software engineering examples, Genie has achieved a state-of-the-art score of 43.8% on the new SWE-bench Verified benchmark, marking the largest improvement ever recorded on this benchmark.

Distyl, an AI solutions provider, achieved first place on the BIRD-SQL benchmark after fine-tuning GPT-4o. On this benchmark, widely regarded as the leading text-to-SQL test, Distyl’s model achieved an execution accuracy of 71.83%, demonstrating superior performance across demanding tasks such as query reformulation and SQL generation.

OpenAI reassures users that fine-tuned models remain entirely under the customer’s control, with complete ownership and privacy of all business data. This means no data is shared or used to train other models.

Stringent safety measures have been implemented to prevent misuse of fine-tuned models. Continuous automated safety evaluations are conducted, alongside usage monitoring, to ensure adherence to OpenAI’s robust usage policies.

(Photo by Matt Artz)

