IBM unveils Granite 3.0 AI models with open-source commitment

IBM has taken the wraps off its most sophisticated family of AI models to date, dubbed Granite 3.0, at the company’s annual TechXchange event.

The Granite 3.0 lineup includes a range of models designed for various applications:

General purpose/language: 8B and 2B variants in both Instruct and Base configurations

Safety: Guardian models in 8B and 2B sizes, designed to implement guardrails

Mixture-of-Experts: A series of models optimised for different deployment scenarios

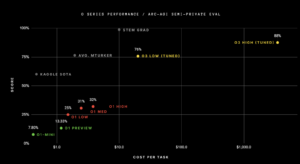

IBM claims that its new 8B and 2B language models can match or surpass the performance of similarly sized offerings from leading providers across numerous academic and industry benchmarks. These models are positioned as versatile workhorses for enterprise AI, excelling in tasks such as Retrieval Augmented Generation (RAG), classification, summarisation, and entity extraction.

A key differentiator for the Granite 3.0 family is IBM’s commitment to open-source AI. The models are released under the permissive Apache 2.0 licence, offering a unique combination of performance, flexibility, and autonomy to both enterprise clients and the broader AI community.

IBM believes that by combining a compact Granite model with proprietary enterprise data, particularly using its novel InstructLab alignment technique, businesses can achieve task-specific performance rivalling that of larger models at a fraction of the cost. Early proofs-of-concept suggest costs up to 23x lower than those of large frontier models.

According to IBM, transparency and safety remain at the forefront of its AI strategy. The company has published a technical report and responsible use guide for Granite 3.0, detailing the datasets used, data processing steps, and benchmark results. Additionally, IBM offers IP indemnity for all Granite models on its watsonx.ai platform, providing enterprises with greater confidence when integrating these models with their own data.

The Granite 3.0 8B Instruct model has shown particularly promising results, outperforming similarly sized open-source models from Meta and Mistral on standard academic benchmarks. It also leads across all measured safety dimensions on IBM’s AttaQ safety benchmark.

IBM is also introducing the Granite Guardian 3.0 models, designed to implement safety guardrails by checking user prompts and LLM responses for various risks. These models offer a comprehensive set of risk and harm detection capabilities, including unique checks for RAG-specific issues such as groundedness and context relevance.
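The guardrail pattern described here, screening the user prompt before generation and the model response after it, can be sketched roughly as follows. This is a minimal illustration and not IBM's implementation or the Granite Guardian API: `guarded_generate`, `run_checks`, `GuardrailResult`, and the toy keyword detector are hypothetical names introduced for this example. In practice each detector would call a risk-detection model rather than match a keyword.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

# Hypothetical detector type: takes text, returns True when it flags a risk.
# A real deployment would call a risk-detection model here.
Detector = Callable[[str], bool]


@dataclass
class GuardrailResult:
    allowed: bool        # False when any detector flagged the prompt or response
    stage: str           # "prompt", "response", or "ok"
    flagged: List[str]   # names of the detectors that fired
    text: str            # model response, or a refusal message when blocked


def run_checks(text: str, detectors: Dict[str, Detector]) -> List[str]:
    """Return the names of all detectors that flag the given text."""
    return [name for name, detect in detectors.items() if detect(text)]


def guarded_generate(
    prompt: str,
    generate: Callable[[str], str],
    prompt_detectors: Dict[str, Detector],
    response_detectors: Dict[str, Detector],
    refusal: str = "Request blocked by guardrails.",
) -> GuardrailResult:
    """Check the prompt, generate only if it passes, then check the response."""
    flagged = run_checks(prompt, prompt_detectors)
    if flagged:
        return GuardrailResult(False, "prompt", flagged, refusal)

    response = generate(prompt)

    flagged = run_checks(response, response_detectors)
    if flagged:
        return GuardrailResult(False, "response", flagged, refusal)

    return GuardrailResult(True, "ok", [], response)


# Toy stand-ins so the sketch runs without any model (assumptions, not real APIs).
def toy_harm_detector(text: str) -> bool:
    return "attack" in text.lower()


def echo_model(prompt: str) -> str:
    return f"Echo: {prompt}"


if __name__ == "__main__":
    checks = {"harm": toy_harm_detector}
    print(guarded_generate("Summarise this report", echo_model, checks, checks))
    print(guarded_generate("How do I attack a server?", echo_model, checks, checks))
```

Keeping the prompt and response detectors in separate dictionaries leaves room for response-only checks, such as the RAG groundedness and context relevance checks mentioned above, which would also need the retrieved context as input.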

The entire suite of Granite 3.0 models is available for download on Hugging Face, with commercial use options on IBM’s watsonx platform. IBM has also collaborated with ecosystem partners to integrate Granite models into various offerings, providing greater choice for enterprises worldwide.
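For readers who want to try a downloaded model, a minimal sketch using the Hugging Face `transformers` library might look like the following. The repository id in `MODEL_ID` is an assumption and should be checked against the model card before use; `build_messages` and `generate_reply` are hypothetical helper names. The sketch also assumes `transformers` and PyTorch are installed and that the tokenizer ships a chat template.

```python
from typing import Dict, List

# Assumed Hugging Face repository id; verify on the model card before use.
MODEL_ID = "ibm-granite/granite-3.0-2b-instruct"


def build_messages(user_text: str) -> List[Dict[str, str]]:
    """Wrap a single user turn in the chat-message format used by chat templates."""
    return [{"role": "user", "content": user_text}]


def generate_reply(user_text: str, max_new_tokens: int = 128) -> str:
    """Download the model (on first call) and generate one reply.

    The import is deferred so this module can be loaded without transformers installed.
    """
    from transformers import AutoModelForCausalLM, AutoTokenizer

    tokenizer = AutoTokenizer.from_pretrained(MODEL_ID)
    model = AutoModelForCausalLM.from_pretrained(MODEL_ID)

    # Render the chat template to text, then tokenize it without adding
    # special tokens a second time.
    prompt = tokenizer.apply_chat_template(
        build_messages(user_text), tokenize=False, add_generation_prompt=True
    )
    inputs = tokenizer(prompt, return_tensors="pt", add_special_tokens=False)

    output_ids = model.generate(**inputs, max_new_tokens=max_new_tokens)

    # Keep only the newly generated tokens, dropping the echoed prompt.
    new_tokens = output_ids[0][inputs["input_ids"].shape[-1]:]
    return tokenizer.decode(new_tokens, skip_special_tokens=True)
```

Calling `generate_reply("Summarise the Apache 2.0 licence in one sentence.")` would download the weights on first use, so the 2B variant is the lighter choice for a quick local test.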

As IBM continues to advance its AI portfolio, the company says it’s focusing on developing more sophisticated AI agent technologies capable of greater autonomy and complex problem-solving. This includes plans to introduce new AI agent features in IBM watsonx Orchestrate and build agent capabilities across its portfolio in 2025.
